Container Networking

by Craig Miller

We have discussed Linux Containers in the past. There are many good reasons to run containers, such as file system isolation, ease of deployment, and lower resource overhead.

In this session we'll look at Containers from a networking perspective, covering different Container frameworks and the advantages and limitations of each framework's networking.

Container network attachments

All of these container frameworks use Linux Network Name Spaces (NNS) to isolate the containers from the host's main networking stack. However, not all of them register their namespaces with iproute2, so ip netns list may not show them.

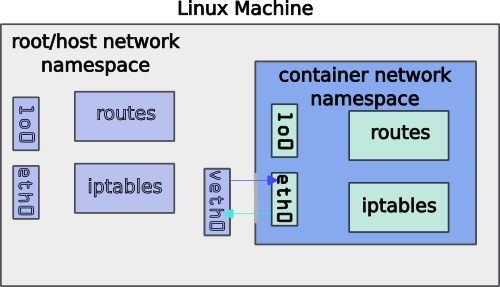

The key to Container networking is that, from inside the container, the network appears to be a normal eth0 interface with an IP address and a default route. From the host side, however, one will see veth interfaces, which are used to forward packets from the container to the host's interface.
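To make the eth0/veth relationship concrete, here is a minimal sketch of wiring up a namespace by hand with iproute2. The names demo-ns and veth-host and the 192.0.2.0/24 addresses are placeholders of mine, not what any of the frameworks actually use, and the helper only prints the commands unless RUN=1 is set (running them for real needs root).

```shell
# Sketch: build a network namespace with a veth pair by hand.
# NS, HOST_IF and the 192.0.2.x addresses are illustrative assumptions.
NS=${NS:-demo-ns}
HOST_IF=${HOST_IF:-veth-host}

# Print each command; execute it only when RUN=1.
step() {
    echo "$*"
    if [ "${RUN:-0}" = "1" ]; then "$@"; fi
}

make_veth_demo() {
    step ip netns add "$NS"
    # One end stays on the host; the peer is created as eth0 inside the namespace.
    step ip link add "$HOST_IF" type veth peer name eth0 netns "$NS"
    step ip addr add 192.0.2.1/24 dev "$HOST_IF"
    step ip link set "$HOST_IF" up
    step ip netns exec "$NS" ip addr add 192.0.2.2/24 dev eth0
    step ip netns exec "$NS" ip link set eth0 up
    step ip netns exec "$NS" ip route add default via 192.0.2.1
}

make_veth_demo
```

A namespace created this way is registered with iproute2, so it shows up in ip netns list, and ip netns del demo-ns removes it again.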

Linux Namespaces

From the namespaces(7) man page: A namespace wraps a global system resource in an abstraction that makes it appear to the processes within the namespace that they have their own isolated instance of the global resource. Changes to the global resource are visible to other processes that are members of the namespace, but are invisible to other processes. One use of namespaces is to implement containers.

Container environments use Namespaces to prevent containers from stepping on other containers' network configurations. To view the namespaces configured on the host, use the lsns command:

NS TYPE NPROCS PID USER COMMAND

4026531834 time 10 3768 cvmiller -ash

4026531835 cgroup 9 3768 cvmiller -ash

4026531836 pid 9 3768 cvmiller -ash

4026531837 user 3 3768 cvmiller -ash

4026531838 uts 9 3768 cvmiller -ash

4026531839 ipc 9 3768 cvmiller -ash

4026531840 net 7 3768 cvmiller -ash

4026531841 mnt 3 3768 cvmiller -ash

4026533669 user 7 4632 cvmiller catatonit -P

4026533670 mnt 5 4632 cvmiller catatonit -P

4026533671 net 3 4784 cvmiller rootlessport

4026533744 mnt 1 4811 cvmiller /whoami

4026533745 mnt 1 4780 cvmiller /usr/bin/slirp4netns --disable-host-loopback --mtu=65520 --enable-sandbox --enable-seccomp --enabl

4026533746 uts 1 4811 cvmiller /whoami

4026533747 ipc 1 4811 cvmiller /whoami

4026533748 pid 1 4811 cvmiller /whoami

4026533749 cgroup 1 4811 cvmiller /whoami
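A long lsns listing is easier to read when summarized by type. The following is a small sketch, not part of lsns itself: count_ns_types is a helper name I made up, and the sample rows are a subset copied from the listing above.

```shell
# Sketch: summarize lsns output by namespace type.
# count_ns_types is a hypothetical helper; it reads lsns-style text on stdin.
count_ns_types() {
    # Data rows start with the numeric namespace ID; column 2 is the TYPE.
    awk '$1 ~ /^[0-9]+$/ { n[$2]++ } END { for (t in n) print t, n[t] }' | sort
}

# Sample rows from the listing above; on a live host use: lsns | count_ns_types
count_ns_types <<'EOF'
        NS TYPE   NPROCS   PID USER     COMMAND
4026531840 net         7  3768 cvmiller -ash
4026531841 mnt         3  3768 cvmiller -ash
4026533670 mnt         5  4632 cvmiller catatonit -P
4026533671 net         3  4784 cvmiller rootlessport
4026533744 mnt         1  4811 cvmiller /whoami
EOF
```

More than one net row means that at least one process is running in its own network namespace, which is a good hint that a container is up.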

The Container Bridge

Docker uses the term bridge to describe what is really a proxy. In classical networking, a bridge is a Layer 2 device that forwards frames based on the destination MAC address. This is not how the Docker Bridge works.

Container bridges often include input/output port mapping, and even IP protocol conversion (IPv6<->IPv4).

The advantage/disadvantage of the Container framework bridge is that it creates isolation of the container from the network. For example, only certain TCP/UDP ports are forwarded into the Container.

General Types of Container Attachments

There are two general types of Container network attachments:

- With NAT

- Without NAT

Not surprisingly, NAT (Network Address Translation) has problems.

You can usually, but not always, tell whether NAT is involved by running the ip addr command on the host and looking at the names of the interfaces (see the table below).

Another way to determine which container frameworks are running is to run the netstat -antp command (you must be root for -p) and look for the telltale proxy process.

sudo netstat -antp

...

tcp 0 0 :::8080 :::* LISTEN 4784/rootlessport

tcp 0 0 :::8888 :::* LISTEN 4932/docker-proxy
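On a busy host the proxies are easy to miss among the other listeners, so it can help to filter for them. This is a rough sketch that assumes the netstat column layout shown above; proxy_listeners is a name I made up, and the sshd row in the sample is invented to show an ordinary listener being filtered out.

```shell
# Sketch: list listening ports owned by container proxies.
# proxy_listeners is a hypothetical helper; it reads netstat -antp style text on stdin.
proxy_listeners() {
    awk '$6 == "LISTEN" && $7 ~ /(rootlessport|docker-proxy)$/ {
        n = split($4, a, ":")   # local address; the port is the last ":" field
        split($7, p, "/")       # PID/program name
        print a[n], p[2]
    }'
}

# First two rows are from the output above; the sshd row is made up.
# On a live host use: sudo netstat -antp | proxy_listeners
proxy_listeners <<'EOF'
tcp 0 0 :::8080 :::* LISTEN 4784/rootlessport
tcp 0 0 :::8888 :::* LISTEN 4932/docker-proxy
tcp 0 0 0.0.0.0:22 0.0.0.0:* LISTEN 612/sshd
EOF
```

Each line of output is a host port followed by the proxy that owns it; rootlessport belongs to rootless Podman and docker-proxy to Docker.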

Limitations of NAT

I have spoken of the limitations of NAT in the past (NAT is evil). One of the biggest limitations in the world of Containers is that NAT restricts which host ports (think: TCP) a container can listen on.

For example, if you have a web services app in a Container that listens on ports 80 and 443 with NAT, you can map those to ports 80 and 443 on the external host. No problem, yet. But if you start a second container with web services, it cannot use host ports 80 and 443, because the first container is already listening on those ports.

Of course, you could set the second container to listen on 8080 and 8443, but then you would need to tell your user base that the service uses special ports. You would also have to adjust your firewall rules to open those secondary ports.

As you add more containers, this problem gets worse. The approach doesn't really scale, since all the containers share the Host's single IP address.
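Because every mapping competes for the same pool of host ports, it can be worth checking a planned set of mappings for clashes before starting anything. The sketch below is my own illustration, not a feature of Docker or Podman: port_clashes and its "container host-port" input format are assumptions.

```shell
# Sketch: flag host ports requested by more than one container.
# port_clashes is a hypothetical helper; input lines are "<container> <host-port>".
port_clashes() {
    awk 'NF >= 2 {
        if ($2 in owner) print "port " $2 ": " owner[$2] " vs " $1
        else owner[$2] = $1
    }'
}

# Two web containers that both want host ports 80 and 443.
port_clashes <<'EOF'
web1 80
web1 443
web2 80
web2 443
EOF
```

With a single host address there is no way to resolve these clashes except by moving one container to other ports.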

Comparison of Containers

Here's a summary table of some of the container types, and their characteristics using the default configuration:

| Framework | NAT? | Host Interface | Version | IPv6 support |
|---|---|---|---|---|
| LXD | No | bridge=veth | 6.0.0 | Yes |
| Incus | No | macvlan=nothing | 5.0.2 | Yes |
| Docker | Yes | docker0 & veth | 26.1.2 | Port forwarding |
| Podman | Yes | nothing | 4.9.4 | Port forwarding |

IPv6 Support

All of the container frameworks above support IPv6; however, they take different approaches, which can be divided into three support mechanisms:

- Automatic: IPv6 is configured automatically by listening to the upstream router's RA (Router Advertisement) and assigning a Global Unicast Address (GUA) to the container. Both LXD & Incus support this mechanism.

- Proxy: the container may or may not support IPv6, but the proxy opens a listening socket on both IPv4 and IPv6. Both Docker & Podman use this mechanism.

- Manual configuration: just like in the 1990s, all IP configuration is static, both inside the container and on the container network. This is daunting to do by hand, which is why the Docker (and Podman) community either:

  - relies on the proxy to handle IPv6 for them, OR

  - uses an orchestrator like Kubernetes to programmatically set up all the static addressing and routing.
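A quick way to guess which mechanism is in play is to look at the kind of IPv6 address a container holds: a GUA suggests the automatic mechanism did its job. The helper below is a sketch of mine, with ipv6_scope as a made-up name. It assumes lowercase addresses as printed by ip, and only looks at the first 16-bit group.

```shell
# Sketch: classify an IPv6 address by its leading group.
# ipv6_scope is a hypothetical helper; it expects lowercase input as printed by ip.
ipv6_scope() {
    case "$1" in
        fe[89ab][0-9a-f]:*)               echo link-local ;;  # fe80::/10
        f[cd][0-9a-f][0-9a-f]:*)          echo ULA ;;         # fc00::/7
        [23][0-9a-f][0-9a-f][0-9a-f]:*)   echo GUA ;;         # 2000::/3
        *)                                echo other ;;
    esac
}

# 2001:db8::/32 is the documentation prefix; all three addresses are examples only.
ipv6_scope 2001:db8::10
ipv6_scope fd10::288
ipv6_scope fe80::1
```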

1. Hands On - Examining Container Networks

Log into the Container Host and answer the following questions:

- host: edge

- login: pi

Attempt/Answer the following:

- What type of container system(s) is/are running?

- Can you see a web page from the container?

- Run nmap against the host. What ports are open?

- Why is IPv6 useful with containers?

- Extra Credit: How many containers are running on the host?

Another look at Namespaces and Containers

Basic commands to see which containers have been instantiated, and their PIDs, in a given container framework:

| Framework | show containers | show container PID |
|---|---|---|
| LXD | lxc ls | lxc ls -c n,p |
| Incus | incus ls | incus ls -c n,p |
| Docker | docker ps -a | docker top <container id> |
| Podman | podman ps -a | podman ps -a --ns |

To look at the network namespace of a container, take its PID and run the ip addr command from within that container's namespace:

sudo nsenter -t <PID> -n ip addr
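With several containers it is handy to repeat this for a list of PIDs. The following sketch wraps the command above in a loop; show_netns is a helper name I made up, and by default it only prints the commands (set RUN=1 to actually run them, which needs sudo).

```shell
# Sketch: show the addresses inside the network namespace of each given PID.
# show_netns is a hypothetical helper; it prints commands unless RUN=1 is set.
show_netns() {
    for pid in "$@"; do
        case "$pid" in
            ''|*[!0-9]*) echo "skipping non-numeric PID: $pid" >&2; continue ;;
        esac
        echo "sudo nsenter -t $pid -n ip addr"
        if [ "${RUN:-0}" = "1" ]; then sudo nsenter -t "$pid" -n ip addr; fi
    done
}

# PIDs 4811 and 4784 come from the lsns listing earlier; substitute your own.
show_netns 4811 4784
```

With Docker, one way to get the PID for a single container is docker inspect -f '{{.State.Pid}}' followed by the container name.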

Not all Containers are alike

As mentioned earlier, some container frameworks have excellent IPv6 support, allowing one to have several containers all listening on ports 80 & 443; others less so. That said, Docker and Podman support Open Container Initiative (OCI) Containers and Container Images, and therefore can run a wider range of containers. For example, Podman can run Docker containers.

Unfortunately, LXD & Incus do not support OCI container images; instead they use a simpler bundled compressed tar format, which can be created using distrobuilder.

Containers and Isolation

There are many reasons why it is useful to run containers, and it is possible to run multiple container frameworks on one system. The Raspberry Pi 3B+ used in the lab is running three systems simultaneously!

So now that you know a little more about what is happening under the hood, feel free to go out and containerize your applications.

Notes:

Installation Links

Good namespaces tutorial on creating namespaces manually

It is possible to bind Docker and Podman containers to a single IPv6 address, and therefore run services on the same ports while listening on different IPv6 addresses. For example, one can bind to the ULA and the GUA separately (assuming your GUA starts with 2001, and your ULA begins with fd10):

export IPADDR=$(ip a | grep 2001 | cut -d ' ' -f 6 | cut -d '/' -f 1)

podman run --name iampodman --rm -p "[$IPADDR]:8888:80" docker.io/containous/whoami

export IPADDR=$(ip a | grep 'fd10:.*288' | cut -d ' ' -f 6 | cut -d '/' -f 1)

docker run --name iamdocker --rm -p "[$IPADDR]:8888:80" containous/whoami
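The grep and cut pipelines above return every matching line, so IPADDR ends up holding several addresses if the host has more than one in that prefix. As a hedged alternative, here is a sketch that picks only the first match; first_addr is a name I made up, and the sample addresses are invented for the demonstration.

```shell
# Sketch: print the first IPv6 address that starts with a given prefix.
# first_addr is a hypothetical helper; it reads "ip -6 addr" style text on stdin.
first_addr() {
    awk -v pfx="$1" '$1 == "inet6" && index($2, pfx) == 1 {
        sub(/\/.*/, "", $2)   # strip the /prefix-length
        print $2
        exit
    }'
}

# Invented sample in the layout that ip prints.
sample_addrs() {
    cat <<'EOF'
    inet6 ::1/128 scope host
    inet6 fd10:1111::288/64 scope global
    inet6 2001:db8:1::288/64 scope global dynamic
    inet6 fe80::1/64 scope link
EOF
}

sample_addrs | first_addr 2001
sample_addrs | first_addr fd10
# On a live host: IPADDR=$(ip -6 addr | first_addr 2001)
```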

23 May 2024