Linux Containers with OpenWrt

by Craig Miller


In Linux Containers on the Pi, I described how to run LXC/LXD on SBCs (Single Board Computers), including the Raspberry Pi.

Linux Containers Part 2

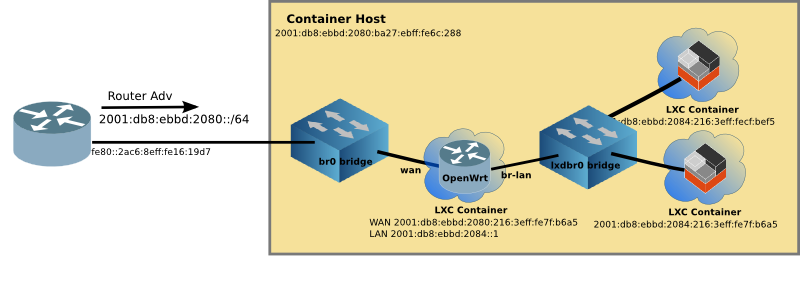

Although you can turn your Pi into an OpenWrt router, the idea never appealed to me, since the Pi has so few (2) interfaces. But after playing with LXD and giving the containers transparent bridged access, it started to make sense. And after creating a server farm on a Raspberry Pi, I can see why some would want a firewall in front of the servers to reduce the attack surface.

Docker attempts this by fronting the containers with the dockerd daemon, but the networking is kludgy at best. If you choose to go it on your own and use Docker's routing, you will quickly find yourself back in the 90s, where everything must be manually configured (address range, gateway addresses, static routes into and out of the container network). The other option is to use NAT44 and NAT66, which is just wrong: it results in losing true peer-to-peer connectivity, limited server access (since only one server can be on port 80 or 443), and the whole host of other brokenness that comes with NAT.

OpenWrt is, on the other hand, a widely used open-source router software project, running on hundreds of different routers. It includes excellent IPv6 support, including DHCPv6-PD (prefix delegation for automatic addressing of the container network, plus route insertion), an easy to use Firewall web interface, and full routing protocol support (such as RIPng or OSPF) if needed.

Going Virtual

The goal is to create a virtual environment which not only has the excellent network management of LXC, but also an easy to use router/firewall via the OpenWrt web interface (called LuCI), all running on the Raspberry Pi (or any Linux machine).

Motivation

The OpenWrt project does an excellent job of creating images for hundreds of routers. I wanted to take a generic existing image and make it work on LXD without recompiling or building OpenWrt from source.

Additionally, I wanted it to run on a Raspberry Pi (ARM processor). Most implementations of OpenWrt in virtual environments run on x86 machines.

If you would rather build OpenWrt, please see the github project https://github.com/mikma/lxd-openwrt (x86 support only)

Installing LXD on the Raspberry Pi

Unfortunately, the default Raspbian image does not support the namespaces or cgroups which are used to isolate Linux Containers. Fortunately, there is an Ubuntu 18.04 image available for the Pi which does.

UPDATE: Sept 2019: Raspbian Buster now supports Linux Containers (LXD) by using snapd. To install LXD on Raspbian:

sudo apt-get install snapd bridge-utils

sudo snap install core lxd

Add your userid to the lxd group, and run lxd init. Done!

If you haven't already installed LXD on your Raspberry Pi, please look at Linux Containers on the Pi blog post.

Creating a LXD Image

NOTE: Unless otherwise stated, all commands are run on the Raspberry Pi

Using lxc image import, an image can be pulled into LXD. The steps are:

- Download the OpenWrt rootfs tarball

- Create a metadata.yaml file, and place into a tar file

- Import the rootfs tarball and metadata tarball to create an image

Getting OpenWrt rootfs

The OpenWrt project not only provides squashfs and ext4 images, but also simple tar.gz files of the rootfs. The current release is 18.06.1, and I recommend starting with it.

The ARM-virt rootfs tarball can be found at OpenWrt

Download the OpenWrt 18.06.1 rootfs tarball for Arm.

The x86 rootfs is here

Create a metadata.yaml file

Although the yaml file can contain quite a bit of information, the minimum requirement is architecture and creation_date. Use your favourite editor to create a file named metadata.yaml

architecture: "armhf"

creation_date: 1544922658

The creation date is the current time (in seconds) since the unix epoch (1 Jan 1970). The easiest way to get this value is to find it on the web, such as at the EpochConverter

Once the metadata.yaml file is created, tar it up and name it anything that makes sense to you.

tar cvf openwrt-meta.tar metadata.yaml
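
The timestamp does not have to come from a website: date +%s prints the current epoch seconds on the Pi itself. A minimal sketch that writes metadata.yaml and packs it in one go, assuming the armhf architecture used above (an x86 rootfs would need a different value). Note that it overwrites any metadata.yaml in the current directory.

```shell
#!/bin/sh
# Write metadata.yaml with the current Unix time, then pack it for
# "lxc image import".
# Assumption: "armhf" matches the 32-bit ARM rootfs used in this article.
ARCH="armhf"
NOW=$(date +%s)    # seconds since 1 Jan 1970 UTC

cat > metadata.yaml <<EOF
architecture: "$ARCH"
creation_date: $NOW
EOF

# Pack the metadata file on its own; the rootfs tarball stays separate.
tar cf openwrt-meta.tar metadata.yaml

# Show what was written.
cat metadata.yaml
```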

Import the image into LXD

Place both tar files (metadata & rootfs) in the same directory on the Raspberry Pi. And use the following command to import the image:

lxc image import openwrt-meta.tar default-root.tar.gz --alias openwrt_armhf

Starting up Virtual OpenWrt

Unfortunately, OpenWrt won't boot from the imported image as it stands. A helper script, init.sh, has been developed to create the devices in /dev that OpenWrt needs before it will boot properly.

The steps to get your virtual OpenWrt up and running are (init.sh is not required if you are using OpenWrt 19.07 or later, omit steps 3-6):

1. Create the container

2. Adjust some of the parameters of the container

3. Download the init.sh script from github

4. Copy the init.sh script to /root on the image

5. Log into the OpenWrt container and execute sh init.sh

6. Validate that OpenWrt has completed booting

Create the OpenWrt Container

I use router as the name of the OpenWrt container

lxc init local:openwrt_armhf router

lxc config set router security.privileged true

In order for init.sh to run the mknod command the container must run as privileged.

Adjust some parameters for the OpenWrt container

Since this is going to be a router, it is useful to have two interfaces (for WAN & LAN), and therefore a profile for this network config must be created. Create the profile, and edit to match the config below (assuming you have br0 as a WAN and lxdbr0 as LAN).

lxc profile create twointf

lxc profile edit twointf

    config: {}
    description: 2 interfaces
    devices:
      eth0:
        name: eth0
        nictype: bridged
        parent: lxdbr0
        type: nic
      eth1:
        name: eth1
        nictype: bridged
        parent: br0
        type: nic
      root:
        path: /
        pool: default
        type: disk
    name: twointf

And then edit the router container to have 2 interfaces. The only line you need to add is the eth1 line, and be sure to have a unique MAC address (or just increment the eth0 MAC).

lxc config edit router

    architecture: armv7l
    config:
      image.architecture: armhf
      image.description: 'OpenWrt 18.06.1 from armvirt/32 '
      image.os: openwrt
      image.release: 18.06.1
      ...
      volatile.eth0.hwaddr: 00:16:3e:72:44:b5
      volatile.eth1.hwaddr: 00:16:3e:72:44:b6
    ...
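
Incrementing a MAC address by hand is easy to get wrong in hex. The following sketch adds one to the last octet. next_mac is a hypothetical helper name, not part of LXD, and it does not carry into the previous octet, so a MAC ending in ff wraps to 00.

```shell
#!/bin/sh
# next_mac MAC: print MAC with its last octet incremented by one.
# Hypothetical helper for illustration; no carry into the fifth octet.
next_mac() {
    prefix=${1%:*}     # everything before the last colon
    last=${1##*:}      # last octet, as two hex digits
    printf '%s:%02x\n' "$prefix" $(( (0x$last + 1) % 256 ))
}

# Example using the eth0 MAC from the listing above.
next_mac 00:16:3e:72:44:b5
```

With the eth0 address from the listing this prints 00:16:3e:72:44:b6, the value used for eth1. The eth0 value itself can be read with lxc config get router volatile.eth0.hwaddr.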

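Now assign the twointf profile to the router container, and remove the default profile (which only has one interface). Since lxc profile assign replaces the container's profile list, the remove command may simply report that default is no longer applied.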

lxc profile assign router twointf

lxc profile remove router default

Download init.sh from the OpenWrt-LXD open source project

The init.sh script is open source and resides on github. To download it on your Pi, use curl (you may have to install curl)

curl https://raw.githubusercontent.com/cvmiller/openwrt-lxd/master/init.sh > init.sh

UPDATE: Sept 2019: OpenWrt release 19.07 no longer requires the use of init.sh. Skip down to Managing the Virtual OpenWrt router

Copy the init.sh to the OpenWrt container

In order to use the lxc file push command the container must be running, so we'll start the router.

lxc start router

Then copy the init.sh script to the container

lxc file push init.sh router/root/

Log into the OpenWrt container and execute the init.sh script

With the container started, the OpenWrt container boot will stall after running procd (think init in linux). By running init.sh the boot process will continue, and OpenWrt should be up and running.

Log into the router container using the lxc exec command, and run the init.sh script.

lxc exec router sh

#

# sh init.sh

Validating OpenWrt is up and running

You can see whether OpenWrt is up and running by looking at the processes. An unhappy container will only have three. A happy container will have about 12. Typing ps inside the container should produce output like this:

~ # ps

PID USER VSZ STAT COMMAND

1 root 1324 S /sbin/procd

78 root 1064 S sh

107 root 1000 S /sbin/ubusd

196 root 1016 S /sbin/logd -S 64

213 root 1328 S /sbin/rpcd

322 root 1512 S /sbin/netifd

357 root 1228 S /usr/sbin/odhcpd

409 root 828 S /usr/sbin/dropbear -F -P /var/run/dropbear.1.pid -p 22 -K 300 -T 3

467 root 820 S odhcp6c -s /lib/netifd/dhcpv6.script -Ntry -P0 -t120 eth1

469 root 1064 S udhcpc -p /var/run/udhcpc-eth1.pid -s /lib/netifd/dhcp.script -f -t 0 -i eth1 -x hostname:router

508 root 1116 S /usr/sbin/uhttpd -f -h /www -r OpenWrt -x /cgi-bin -t 60 -T 30 -k 20 -A 1 -n 3 -N 100 -R -p 0.0.

850 dnsmasq 1152 S /usr/sbin/dnsmasq -C /var/etc/dnsmasq.conf.cfg01411c -k -x /var/run/dnsmasq/dnsmasq.cfg01411c.pi

1191 root 1064 R ps
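
The same check can be scripted from the Pi. This is a rough sketch, not part of the openwrt-lxd project: count_procs and booted are hypothetical helper names, and the threshold of three is taken from the description above, so treat it as a guide rather than a guarantee.

```shell
#!/bin/sh
# count_procs: count the processes in BusyBox "ps" output read from stdin.
# The first line is the column header, so it is skipped.
count_procs() {
    awk 'NR > 1 { n++ } END { print n + 0 }'
}

# booted: succeed when more than three processes are listed,
# i.e. the boot has moved past the stalled state described above.
booted() {
    [ "$(count_procs)" -gt 3 ]
}

# Usage on the Pi (the count includes the ps process itself):
#   lxc exec router -- ps | booted && echo "OpenWrt is up"
```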

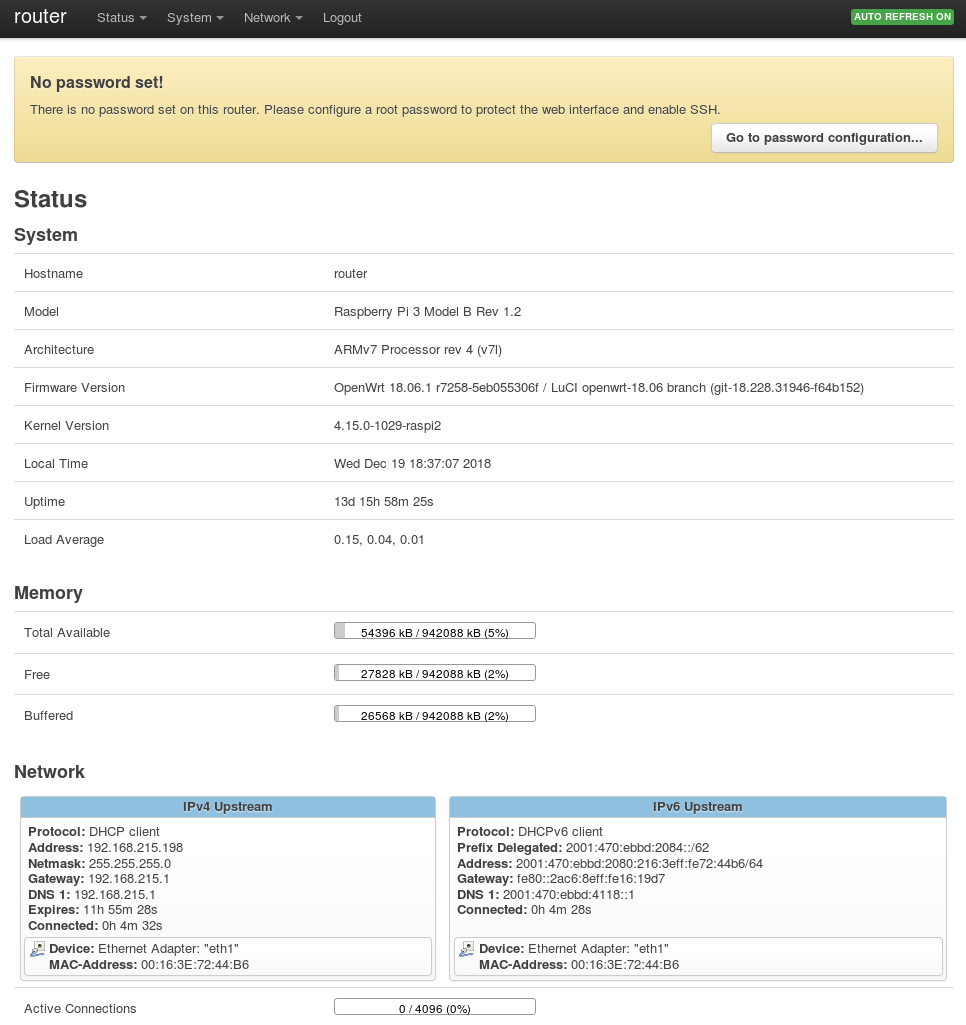

Additionally, if you have connected the router up the right way (e.g. WAN=eth1/br0, LAN=eth0/lxdbr0), then the WAN and LAN should have addresses. Use ip addr to view them. (Note the IP address of the WAN interface for managing the router later.)

Managing the Virtual OpenWrt router

The LuCI web interface by default is blocked on the WAN interface. In order to manage the router from the outside, a firewall rule allowing web access from the WAN must be inserted.

The standard way is to add the following to the bottom of the /etc/config/firewall file within the OpenWrt container.

lxc exec router sh

# vi /etc/config/firewall

...

config rule

option target 'ACCEPT'

option src 'wan'

option proto 'tcp'

option dest_port '80'

option name 'ext_web'

Save the file and then restart the firewall within the OpenWrt container.

/etc/init.d/firewall restart

Now you should be able to point your web browser to the WAN address (the eth1 address in the output of ip addr) and log in. The password is blank.

http://[2001:db8:ebbd:2080::93b]/
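
An IPv6 literal has to be wrapped in square brackets inside a URL, as in the example above. The sketch below builds that URL from ip output. luci_url is a hypothetical helper name, and it simply takes the first global-scope address it finds, which may be a ULA (fd...) rather than the GUA, depending on the order in which the addresses are listed.

```shell
#!/bin/sh
# luci_url: read "ip -6 addr show dev eth1" output on stdin and print
# an http URL for the first address with global scope.
luci_url() {
    awk '$1 == "inet6" && /scope global/ {
        sub(/\/.*/, "", $2)          # drop the /prefix-length suffix
        print "http://[" $2 "]/"
        exit
    }'
}

# Usage on the Pi:
#   lxc exec router -- ip -6 addr show dev eth1 | luci_url
```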

Follow the instructions to set a password, and configure the firewall as you like.

Step back and admire work

Type exit to return to the Raspberry Pi prompt. By looking at some lxc output, we can see the virtual network up and running.

$ lxc ls

+---------+---------+------------------------+-----------------------------------------------+------------+-----------+

| NAME | STATE | IPV4 | IPV6 | TYPE | SNAPSHOTS |

+---------+---------+------------------------+-----------------------------------------------+------------+-----------+

| docker1 | RUNNING | 192.168.215.220 (eth0) | fd6a:c19d:b07:2080:216:3eff:fe58:1ac9 (eth0) | PERSISTENT | 0 |

| | | 172.17.0.1 (docker0) | fd4b:7e4:111:0:216:3eff:fe58:1ac9 (eth0) | | |

| | | | 2001:db8:ebbd:2080:216:3eff:fe58:1ac9 (eth0) | | |

+---------+---------+------------------------+-----------------------------------------------+------------+-----------+

| router | RUNNING | 192.168.215.198 (eth1) | fd6a:c19d:b07:2084::1 (br-lan) | PERSISTENT | 1 |

| | | 192.168.181.1 (br-lan) | fd6a:c19d:b07:2080::8d1 (eth1) | | |

| | | | fd6a:c19d:b07:2080:216:3eff:fe72:44b6 (eth1) | | |

| | | | fd4b:7e4:111::1 (br-lan) | | |

| | | | fd4b:7e4:111:0:216:3eff:fe72:44b6 (eth1) | | |

| | | | 2001:db8:ebbd:2084::1 (br-lan) | | |

| | | | 2001:db8:ebbd:2080::8d1 (eth1) | | |

| | | | 2001:db8:ebbd:2080:216:3eff:fe72:44b6 (eth1) | | |

+---------+---------+------------------------+-----------------------------------------------+------------+-----------+

| www | RUNNING | 192.168.181.158 (eth0) | fd6a:c19d:b07:2084:216:3eff:fe01:e0a3 (eth0) | PERSISTENT | 0 |

| | | | fd4b:7e4:111:0:216:3eff:fe01:e0a3 (eth0) | | |

| | | | fd42:dc68:dae9:28e9:216:3eff:fe01:e0a3 (eth0) | | |

| | | | 2001:db8:ebbd:2084:216:3eff:fe01:e0a3 (eth0) | | |

+---------+---------+------------------------+-----------------------------------------------+------------+-----------+

The docker1 container is still running from Part 1, and still connected to the outside network br0. You can see this by the addressing assigned (both v4 and v6).

The router container (which is running OpenWrt) has both eth1 (aka WAN) and br-lan (aka LAN) interfaces. The br-lan interface is connected to the inside lxdbr0 virtual network. And OpenWrt routes between the two networks.

Lastly the www container is just another instantiation of the web container (created in Part 1), but connected to the inside network. It was started with the following command:

lxc launch -p default local:web_image www

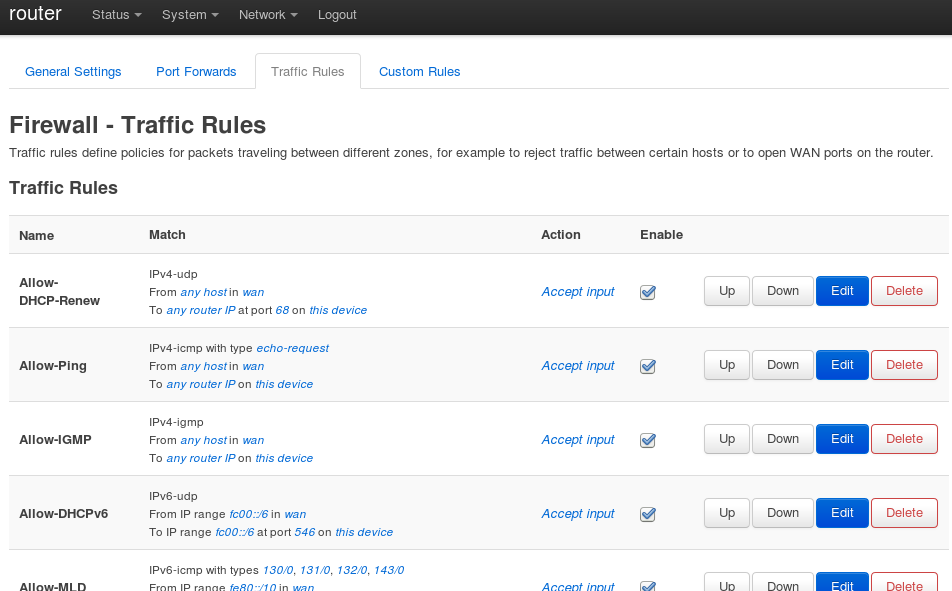

Managing the OpenWrt Firewall

In order to permit access to webservers, a firewall rule must allow the traffic.

Example 1: Create lots of webservers

After creating two, I wrote a little shell script to create the rest, called start_webs.sh

#!/bin/bash

MAX=10

HTMLFILE="/var/www/localhost/htdocs/index.html"

for i in $(seq 3 $MAX)

do

echo "starting web container: $i"

free -h

lxc launch -p default local:web_image w$i

# update webserver home page

lxc exec w$i -- sed -i "s/Container/Container w$i/" $HTMLFILE

done

# show the containers

lxc ls

With the webservers running, add a new firewall rule to allow port 80 traffic to pass to any host on the inside network (the lxdbr0 bridge).
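
One way to add such a rule without opening an editor is to append it to /etc/config/firewall from a script. This is a sketch under assumptions: it relies on the default OpenWrt zone names wan and lan, and append_forward_rule and the rule name int_web are made up for illustration. Because the rule names both a source and a destination zone, it applies to forwarded traffic rather than to traffic addressed to the router itself.

```shell
#!/bin/sh
# append_forward_rule FILE PORT NAME: append a rule that lets TCP traffic
# for PORT arriving on the wan zone be forwarded to hosts in the lan zone.
# Hypothetical helper; assumes the default zone names 'wan' and 'lan'.
append_forward_rule() {
    file=$1
    port=$2
    name=$3
    cat >> "$file" <<EOF

config rule
    option name '$name'
    option src 'wan'
    option dest 'lan'
    option proto 'tcp'
    option dest_port '$port'
    option target 'ACCEPT'
EOF
}

# Usage inside the OpenWrt container:
#   append_forward_rule /etc/config/firewall 80 int_web
#   /etc/init.d/firewall restart
```

The same helper can be called again with a different port and name when another service needs to be reachable from the outside.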

Now point your browser at one (or more) of the webservers to see the content.

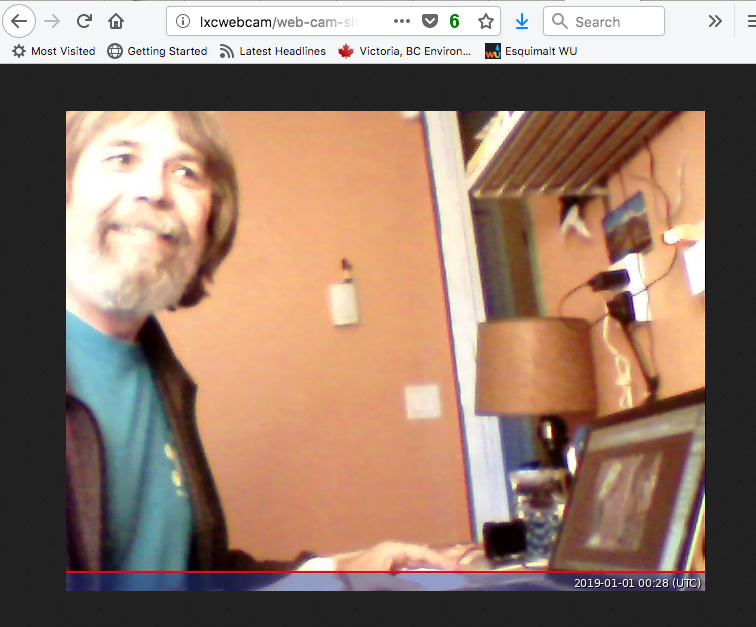

Example 2: Running a webcam in a container

This is an example from a previous VicPiMakers.ca talk, but now running inside a container.

Earlier, I created a container based on Debian 10 (Buster), the next version of Debian. Since we are running the webcam inside a container, the webcam device (/dev/video0) must be mapped into the container.

lxc launch images:debian/10 webcam

# map webcam into container with 'audio' GID

lxc config device add webcam video0 unix-char path=/dev/video0 gid=29

Once that is done, step inside the container and install the software needed to run the webcam and the IPv6-enabled Python webserver. This takes a while because of the dependencies.

# install apps to run webcam

lxc exec webcam bash

apt-get install fswebcam curl python3 openssh-server sudo

Now start fswebcam, which will take a photo every 5 seconds (-l 5); -b places the program in the background.

Then start the Python webserver:

# start webcam taking photos every 5 seconds

$ fswebcam -r 640x480 --jpeg 85 -S 10 -l 5 -b web-cam-shot.jpg

# start the personal webserver

$ ~/bin/ipv6-httpd.py

Listening on port:8080

Point your web browser to the webcam container on port 8080 to see the files, then click on web-cam-shot.jpg. Note: the firewall will require a rule for port 8080 as well.

As you can see the firewall prevents unauthorized probing of the inside network.

Limitations of Virtual OpenWrt

There are some limitations of the virtual OpenWrt. Please see the github project for the most current list. Most notably, ssh works but needs improving (fixed with OpenWrt 19.07).

Address Stability

Because all of this is running on LXC, there is address stability. No matter how many times you reboot the Raspberry Pi, or restart containers in a different order, the addresses remain the same. This means the addresses above can be entered into your DNS server without churn, something Docker doesn't provide.
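
One likely reason the IPv6 addresses stay put: LXD keeps each container's MAC address (the volatile.*.hwaddr keys in the container config) across restarts, and the SLAAC addresses in the lxc ls listing are built from that MAC using the modified EUI-64 rule (flip the universal/local bit of the first octet and insert ff:fe in the middle). A small sketch of that derivation, with eui64_iid as a hypothetical helper name:

```shell
#!/bin/sh
# eui64_iid MAC: print the modified EUI-64 interface identifier
# (the last 64 bits of a SLAAC address) derived from a MAC address.
# Hypothetical helper for illustration.
eui64_iid() {
    old_ifs=$IFS
    IFS=:
    set -- $1                      # split the MAC into six octets: $1..$6
    IFS=$old_ifs
    first=$(( 0x$1 ^ 0x02 ))       # flip the universal/local bit
    printf '%x:%x:%x:%x\n' \
        $(( (first << 8) | 0x$2 )) \
        $(( (0x$3 << 8) | 0xff )) \
        $(( 0xfe00 | 0x$4 )) \
        $(( (0x$5 << 8) | 0x$6 ))
}

# The router's eth1 MAC from the container config shown earlier.
eui64_iid 00:16:3e:72:44:b6
```

This prints 216:3eff:fe72:44b6, which is the tail of the router's eth1 addresses in the listing. As long as the MAC and the advertised prefix do not change, neither does the address.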

Running a Virtual Network

LXC is the best at container customization and virtual networking (IPv4 and IPv6). With LXC's flexibility, it is easy to create templates to scale up multiple applications (e.g. a webserver farm). OpenWrt is one of the best open-source router projects, and now it can be run virtually as well. Now you have a server farm in the palm of your hand, with excellent IPv6 support and a firewall! Perhaps the Docker folks will take note.

3 Jan 2019

23 Sept 2019, Updated 14 Jan 2020

Palm Photo by Alie Koshes